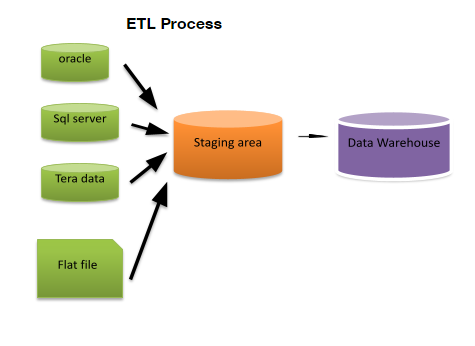

ETL (Extract, Transform, and Load) extracts the data from different source systems then transforms the data and loads the data into data warehouse system.

ETL Testing:

ETL testing is done before data is moved into a production data ware house system. It is sometimes also called table balancing or production reconciliation. The main goal of ETL testing is to identify and mitigate data defects and general errors that occur prior to processing of data for analytical reporting.

ETL Testing tasks performed are

- Understanding the data to be used for reporting.

- Review data Model

- Source to target mapping

- Data checks on source data

- Packages and schema validation

- Data integrity and quality checks in the target system.

- Performance testing data.

ETL testing involves the operations like:

- Validating the data movement from the source to the target system

- checking of data count in the source and the target system.

- validating data extraction, transformation as per requirement and expectations

- Verifying the table relations like joins and keys are preserved during the transformation

We need ETL because it helps companies to analyse their business data for taking many business decisions. A data warehouse provides a common data repository. ETL provides a method of moving the data from many different sources into data warehouse. As data sources will change the data warehouse will automatically update. ETL process accepts the sample data comparison between the source and the target system. ETL process can perform complex transformation and also needs extra area to store the data. ETL is a predefined process for accessing and manipulating source data into the target database.ETL offers deep historical context for the business and it also helps in improving the quality because it codifies and reuses without a need for technical skills.

It consists of three processes

- Extraction

- Transform

- Load

1. Extraction

Here data is taken from the source system into the staging area.staging area gives an opportunity to validate extracted data before it moves into the datawarehouse. There are three data extraction methods.

- Full extraction

- Partial extraction without update notification

- Partial Extraction with update notification

The extraction should not affect the performance and response time of the source systems irrespective of any method used. These source system are live production databases. The validations done during the extraction are:

- Reconcile records with sources data

- Make sure that no spam data loaded

- Data type check

- Remove all types of duplicate data

2. Transform

Data extracted from source server is raw and not usable in its original form. Therefore it needs to be cleansed, mapped and transformed. In transformation step, we can also do customised operations on data like the user wants sum of sales revenue which is not in the database. Validations done during this stage are filtering using rules and lookup tables for data standardisation, character set conversion and encoding handling, use lookups to merge data, using any complex data validation.

3. Loading

Loading data into the target data warehouse database is the last step of ETL process. Huge volume of data needs to be loaded in a relatively short period. Load process should be optimized for performance.

Types of loading:

- Initial load-populating all data warehouse tables

- Incremental Load-applying ongoing changes as when needed periodically.

- Full refresh-erasing the contents of one or more tables and reloading with fresh data.

The different examples ETL tools are Markogic, Oracle, Amazon Redshift.

13 Responses

ETL testing is done before data is moved into a production data ware house system.

The main goal of ETL testing is to identify and mitigate data defects and general errors that occur prior to processing of data for analytical reporting.

ETL testing involves the operations like:

1.Validating the data movement from the source to the target system

2. Checking of data count in the source and the target system.

3.validating data extraction, transformation as per requirement and expectations

4.Verifying the table relations like joins and keys are preserved during the transformation

ETL consists of three processes:

1. Extract

2. Transform

3. Load

ETL extracts the data from different source systems then transforms the data and loads the data into data warehouse system.

ETL testing is done before data is moved into a production data ware house system. The main goal of ETL testing is to identify and mitigate data defects and general errors that occur prior to processing of data for analytical reporting.

A data warehouse provides a common data repository. ETL provides a method of moving the data from many different sources into data warehouse. As data sources will change the data warehouse will automatically update. ETL process accepts the sample data comparison between the source and the target system. ETL process can perform complex transformation and also needs extra area to store the data. ETL is a predefined process for accessing and manipulating source data into the target database. ETL offers deep historical context for the business and it also helps in improving the quality because it codifies and reuses without a need for technical skills. This consists of three processes Extraction,Transform,Load

ETL Testing tasks performed are:

*Understanding the data to be used for reporting.

*Review data Model

*Source to target mapping

*Data checks on source data

*Packages and schema validation

*Data integrity and quality checks in the target system.

*Performance testing data.

ETL testing involves the operations like:

*Validating the data movement from the source to the target system

*checking of data count in the source and the target system.

*validating data extraction, transformation as per requirement and expectations

*Verifying the table relations like joins and keys are preserved during the transformation

ETL (Extract, Transform, and Load) extracts the data from different source systems then transforms the data and loads the data into data warehouse system.

ETL Testing tasks performed are

• Understanding the data to be used for reporting.

• Review data Model

• Source to target mapping

• Data checks on source data

• Packages and schema validation

• Data integrity and quality checks in the target system.

• Performance testing data.

ETL testing involves the operations like:

1. Validating the data movement from the source to the target system

2. checking of data count in the source and the target system.

3. validating data extraction, transformation as per requirement and expectations

4. Verifying the table relations like joins and keys are preserved during the transformation

ETL Testing

Extract, Transform, and Load (ETL) extracts the data from different source systems then transforms the data and loads the data into data warehouse system. It is done before data is moved into a production data ware house system and sometimes also called table balancing or production reconciliation. The main goal of ETL testing is to identify and mitigate data defects and general errors that occur prior to processing of data for analytical reporting. It helps companies to analyse their business data for taking many business decisions.

ETL testing involves the operations like:

– Validating the data movement from the source to the target system

– Checking of data count in the source and the target system

– Validating data extraction, transformation as per requirement and expectations

– Verifying the table relations like joins and keys are preserved during the transformation

ETL testing consists of three processes:

1. Extraction

2. Transform

3. Loading

ETL Testing

ETL (Extract, Transform, and Load) extracts the data from different source systems then transforms the data and loads the data into data warehouse system.

he main goal of ETL testing is to identify and mitigate data defects and general errors that occur prior to processing of data for analytical reporting.

ETL Testing tasks performed are:

1)Understanding the data to be used for reporting.

2)Review data Model

3)Source to target mapping

4)Data checks on source data

5)Packages and schema validation

6)Data integrity and quality checks in the target system.

7)Performance testing data.

ETL testing involves the operations like:

1)Validating the data movement from the source to the target system

2)checking of data count in the source and the target system.

3)validating data extraction, transformation as per requirement and expectations

4)Verifying the table relations like joins and keys are preserved during the transformation

ETL Testing consists of three processes:

1)Extraction

2)Transform

3)Load

ETL extracts the data from different source systems then transforms the data and loads the data into data warehouse system.

ETL testing is done before data is moved into a production data ware house system. The main goal of ETL testing is to identify and mitigate data defects and general errors that occur prior to processing of data for analytical reporting.

ETL Testing tasks performed are:

1)Understanding the data to be used for reporting.

2)Review data Model

3)Source to target mapping

4)Data checks on source data

5)Packages and schema validation

6)Data integrity and quality checks in the target system.

7)Performance testing data.

ETL testing involves the operations like:

1)Validating the data movement from the source to the target system

2)checking of data count in the source and the target system.

3)validating data extraction, transformation as per requirement and expectations.

4)Verifying the table relations like joins and keys are preserved during the transformation.

ETL Testing consists of three processes:

1)Extraction

2)Transform

3)Loading

ETL (Extract, Transform, and Load)

It extracts the data from different source systems then transforms the data and loads the data into data warehouse system.

Tasks of ETL

-Understanding the data to be used for reporting.

-Review data Model

-Source to target mapping

-Data checks on source data

-Packages and schema validation

-Data integrity and quality checks in the target system.

-Performance testing data.

ETL testing involves the operations like:

-Validating the data movement from the source to the target system

-checking of data count in the source and the target system.

-validating data extraction, transformation as per requirement and expectations

-Verifying the table relations like joins and keys are preserved during the transformation

It consists of three processes : Extraction ,Transform ,Load

1. Extraction:

Here data is taken from the source system into the staging area.staging area gives an opportunity to validate extracted data before it moves into the datawarehouse. There are three data extraction methods.

1.Full extraction

2.Partial extraction without update notification

3.Partial Extraction with update notification

The extraction should not affect the performance and response time of the source systems irrespective of any method used.The validations done during the extraction are:

a.Reconcile records with sources data

b.Make sure that no spam data loaded

c.Data type check

d.Remove all types of duplicate data

2. Transform:

Data extracted from source server is raw and not usable in its original form. Therefore it needs to be cleansed, mapped and transformed. In transformation step, we can also do customised operations on data like the user wants sum of sales revenue which is not in the database.

3. Loading:

Loading data into the target data warehouse database is the last step of ETL process. Huge volume of data needs to be loaded in a relatively short period. Load process should be optimized for performance

ETL testing

ETL means Extract Transform Load

It extracts the data from different source systems like oracle ,SQL flat file, tera data. Then it transforms data into different data types and then it loads or stores into one storage location as dataware house.

ETL performs various tasks :

1. Validation of data

2. Checking of data

3. Validating data extraction and transform as per requirement

4. Verifying data

ETL testing is done before data is moved into a production data ware house system.

The main goal of ETL testing is to identify and mitigate data defects and general errors that occur prior to processing of data for analytical reporting.

ETL testing involves the operations like:

1.Validating the data movement from the source to the target system

2. Checking of data count in the source and the target system.

3.validating data extraction, transformation as per requirement and expectations

4.Verifying the table relations like joins and keys are preserved during the transformation

ETL consists of three processes:

1. Extract:

There are three data extraction methods.

Full extraction

Partial extraction without update notification

Partial Extraction with update notification

The extraction should not affect the performance and response time of the source systems irrespective of any method used. These source system are live production databases. The validations done during the extraction are:

Reconcile records with sources data

Make sure that no spam data loaded

Data type check

Remove all types of duplicate data

2. Transform:

Data extracted from source server is raw and not usable in its original form. Therefore it needs to be cleansed, mapped and transformed. In transformation step, we can also do customised operations on data like the user wants sum of sales revenue which is not in the database. Validations done during this stage are filtering using rules and lookup tables for data standardisation, character set conversion and encoding handling, use lookups to merge data, using any complex data validation.

3. Load—— Types of loading:

Initial load-populating all data warehouse tables

Incremental Load-applying ongoing changes as when needed periodically.

Full refresh-erasing the contents of one or more tables and reloading with fresh data.

ETL testing is done before data is moved into a production data ware house system.

The main goal of ETL testing is to identify and mitigate data defects and general errors that occur prior to processing of data for analytical reporting.

ETL testing involves the operations like:

1.Validating the data movement from the source to the target system

2. Checking of data count in the source and the target system.

3.validating data extraction, transformation as per requirement and expectations

4.Verifying the table relations like joins and keys are preserved during the transformation

ETL consists of three processes:

1. Extract

2. Transform

3. Load

ETL (Extract, Transform, and Load) extracts the data from different source systems then transforms the data and loads the data into data warehouse system. ETL testing is done before data is moved into a production data ware house system. The main goal of ETL testing is to identify and mitigate data defects and general errors that occur prior to processing of data for analytical reporting.

ETL testing tasks performed are

-understanding the data to be used for reporting

-review data model

-source to target mapping

-data checks on source data

-packages and schema validation

-data integrity and quality checks in the target system.

-performance testing data

ETL testing involves the operations like:

1.validating the data movement from the source to the target system.

2.checking the data count in the source and the target system.

3.validating data extraction, transformation as per requirement and expectations

4.verifying the table relations like joins and keys are preserved during the transformation.

It consists of three processes

1.extraction

2.transform

3. load