Introduction:

In a world driven by software, reliability is no longer a luxury; it’s a necessity. Whether it’s the autopilot system in an aircraft or the control software in a medical device, a single bug can have catastrophic consequences. That’s where Formal Verification Testing comes into play.

Unlike traditional software testing methods, which involve running programs and checking outputs, Formal Verification Testing mathematically proves that a system meets its specification. It has gained momentum among those pursuing advanced QA IT training or enrolling in quality assurance courses online, especially in industries where safety and precision are paramount.

This guide will walk you through the fundamentals of formal verification, its significance in QA, real-world applications, and how you can master it through testing courses online.

What Is Formal Verification Testing?

A Mathematical Approach to Software Assurance

Formal Verification Testing is the process of using mathematical techniques to verify that a software program behaves correctly with respect to a formal specification or model. It ensures logical correctness rather than empirical correctness derived from test cases.

Traditional QA testing exercises only the scenarios its test cases happen to cover. Formal verification instead reasons about every behavior the model permits, including edge cases that may never be triggered in standard tests.

How It Works

- Formal Specification: Define the desired behavior of the system using mathematical logic (e.g., temporal logic).

- Modeling: Abstract the system into a simplified model that preserves the behavior relevant to the properties being checked.

- Verification Tools: Use tools like model checkers or theorem provers to validate that the model meets the specification.
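The three steps above can be sketched on a toy system. This is a minimal illustration, not how production tools work internally: the microwave model, the `safe` property, and the `check_invariant` helper are all hypothetical names invented for this example.

```python
from collections import deque

# Hypothetical toy model: a microwave with a door and a heater.
# A state is a pair (door_open, heating).
INITIAL = (False, False)

def transitions(state):
    """Step 2, the model: every state reachable in one step."""
    door_open, heating = state
    nxt = []
    if door_open:
        nxt.append((False, heating))      # close the door
    else:
        nxt.append((True, False))         # opening the door cuts the heater
        nxt.append((False, not heating))  # start or stop while closed
    return nxt

def safe(state):
    """Step 1, the specification: never heat while the door is open."""
    door_open, heating = state
    return not (door_open and heating)

def check_invariant(initial, transitions, invariant):
    """Step 3, verification: visit every reachable state.

    Returns a violating state, or None if the invariant always holds.
    """
    seen, queue = {initial}, deque([initial])
    while queue:
        state = queue.popleft()
        if not invariant(state):
            return state
        for nxt in transitions(state):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return None

violation = check_invariant(INITIAL, transitions, safe)
print("property holds" if violation is None else f"violated in {violation}")
```

Because the search visits every reachable state rather than a sample of runs, a `None` result is a proof for this model, and only for this model.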

Why Formal Verification Matters in QA Testing

1. Proves the Absence of Specific Errors

No matter how robust your traditional QA testing is, it can only show the presence of bugs, never their absence. Formal verification can go further: it provides mathematical guarantees that the software will not exhibit certain classes of errors, provided the specification and the model accurately reflect the real system.

This is a core reason why top-tier QA testing online training modules include a section on formal methods, particularly for roles in mission-critical systems.

2. Reduces Long-Term Costs

While the upfront investment in formal methods is higher due to complexity and time, it significantly reduces post-deployment failures, leading to lower maintenance and support costs.

3. Complements Traditional QA Techniques

Formal verification doesn’t replace functional or performance testing but enhances them. It’s ideal for verifying:

- Algorithms

- Communication protocols

- Security policies

- Hardware design (chip verification)

If you’re exploring quality assurance courses online, look for ones that introduce formal logic and its integration with manual and automated testing.

Key Components of Formal Verification

1. Specifications

Specifications are written using mathematical languages, such as:

- Z Notation

- Temporal Logic

- Alloy

- VDM (Vienna Development Method)

These are formal representations of what a program should do.

2. Modeling

The next step is creating a model of the system. Think of it as a high-level abstraction capturing all possible states and transitions of the program.
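As a rough sketch of what "states and transitions" means in practice, the snippet below enumerates the full state graph of a small login model. The lockout rule, the event names, and the `step` and `reachable` helpers are assumptions made up for illustration.

```python
# Hypothetical login model: a state is (failed_attempts, status).
MAX_FAILURES = 3
EVENTS = ("good_password", "bad_password", "logout")

def step(state, event):
    """Transition relation: the next state, or None if the event is disabled."""
    failures, status = state
    if status == "logged_out":
        if event == "good_password":
            return (0, "logged_in")
        if event == "bad_password":
            failures += 1
            locked = failures >= MAX_FAILURES
            return (failures, "locked" if locked else "logged_out")
    if status == "logged_in" and event == "logout":
        return (0, "logged_out")
    return None  # "locked" is terminal in this sketch

def reachable(initial):
    """Enumerate the state graph as {state: {event: next_state}}."""
    graph, frontier = {}, [initial]
    while frontier:
        state = frontier.pop()
        if state in graph:
            continue
        graph[state] = {}
        for event in EVENTS:
            nxt = step(state, event)
            if nxt is not None:
                graph[state][event] = nxt
                frontier.append(nxt)
    return graph

graph = reachable((0, "logged_out"))
for state in sorted(graph):
    print(state, "->", graph[state])
```

The model has only five reachable states, which is the point of abstraction: details such as the actual password are dropped, while the lockout behavior under study is kept.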

3. Verification Tools

Common tools used include:

- SPIN: For model checking of distributed systems.

- Coq and Isabelle: Interactive theorem provers (proof assistants) widely used in academia and research.

- Frama-C: A platform for static analysis and deductive verification of C code.

- NuSMV: Symbolic model checking tool.

- ACL2: A theorem prover used for hardware and software verification in industrial projects.

Modern QA IT training often includes labs with these tools, especially for learners targeting sectors like aerospace or healthcare.

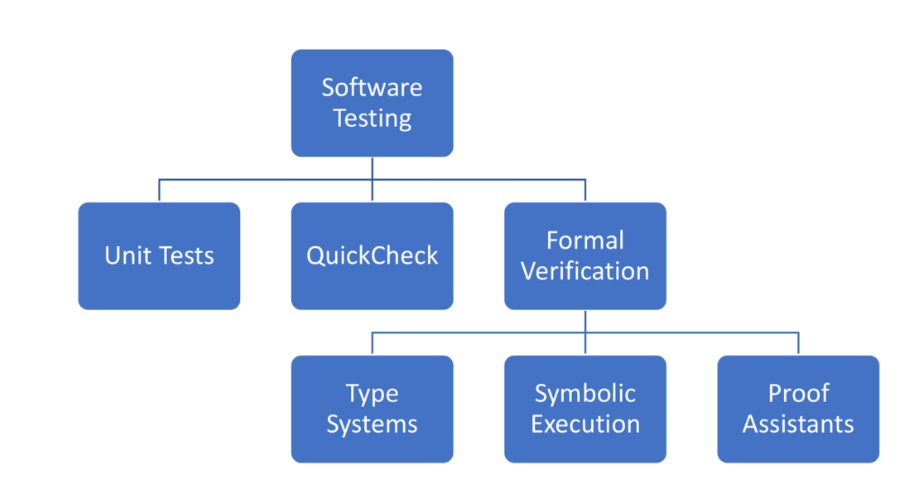

Types of Formal Verification Techniques

1. Model Checking

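A fully automated technique that explores all possible system states to check that the system satisfies a given property. When the property fails, the tool reports a counterexample trace showing how the bad state is reached.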

Best for: Finite state systems, communication protocols, safety verification.
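To make the idea concrete, here is a small explicit-state search over a deliberately broken two-process lock, assuming a made-up protocol in which each process first checks the other's flag and only later raises its own. Real model checkers such as SPIN use far more sophisticated algorithms; the function names below are hypothetical.

```python
from collections import deque

def successors(state):
    """Interleave two processes running a naive (broken) flag lock."""
    pcs, flags = state
    for i in (0, 1):
        other = 1 - i
        pc = pcs[i]
        if pc == "idle" and not flags[other]:
            new_pc, new_flag = "ready", flags[i]   # saw the other flag down
        elif pc == "ready":
            new_pc, new_flag = "crit", True        # raise own flag, enter
        elif pc == "crit":
            new_pc, new_flag = "idle", False       # leave, lower own flag
        else:
            continue                               # blocked: other flag is up
        new_pcs = tuple(new_pc if j == i else pcs[j] for j in (0, 1))
        new_flags = tuple(new_flag if j == i else flags[j] for j in (0, 1))
        yield (new_pcs, new_flags)

def mutual_exclusion(state):
    """Property: the two processes are never both in the critical section."""
    pcs, _ = state
    return pcs != ("crit", "crit")

def find_counterexample(initial, successors, prop):
    """Breadth-first search; returns a shortest violating trace or None."""
    parent = {initial: None}
    queue = deque([initial])
    while queue:
        state = queue.popleft()
        if not prop(state):
            trace = []
            while state is not None:
                trace.append(state)
                state = parent[state]
            return trace[::-1]
        for nxt in successors(state):
            if nxt not in parent:
                parent[nxt] = state
                queue.append(nxt)
    return None

INITIAL = (("idle", "idle"), (False, False))
trace = find_counterexample(INITIAL, successors, mutual_exclusion)
for step in trace or []:
    print(step)
```

The printed trace is the interleaving in which both processes check the flags before either one raises its own, the kind of timing-dependent bug that ordinary test runs rarely hit.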

2. Theorem Proving

A semi-automated approach that involves writing logical proofs using formal rules.

Best for: Complex algorithms, mathematical software, cryptography.
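For a flavor of what a machine-checked proof looks like, here are two tiny statements written for Lean 4 (a proof assistant in the same family as Coq and Isabelle, chosen here only for illustration). The theorem names are made up for this sketch.

```lean
-- Conjunction is commutative: from a proof of p ∧ q, build one of q ∧ p.
theorem and_swap_sketch (p q : Prop) : p ∧ q → q ∧ p :=
  fun ⟨hp, hq⟩ => ⟨hq, hp⟩

-- Adding zero on the right holds by unfolding the definition of addition.
theorem add_zero_sketch (n : Nat) : n + 0 = n := rfl
```

The prover accepts a theorem only if every step follows from its logical rules, so the second statement holds for all natural numbers rather than for a tested sample.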

3. Abstract Interpretation

A static-analysis method that soundly over-approximates a program’s possible behaviors without executing it, in order to find potential bugs or prove that certain errors cannot occur.

Best for: Static analysis, detecting undefined behaviors, memory errors.
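A minimal sketch of the idea, assuming the simplest abstract domain (integer intervals) and a hypothetical check for division by zero. Industrial analyzers use much richer domains; the `Interval` class and `may_divide_by_zero` helper are invented for this example.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Interval:
    """Abstract value: every integer between lo and hi, inclusive."""
    lo: int
    hi: int

    def __add__(self, other):
        return Interval(self.lo + other.lo, self.hi + other.hi)

    def __sub__(self, other):
        return Interval(self.lo - other.hi, self.hi - other.lo)

    def may_contain(self, value):
        return self.lo <= value <= self.hi

def may_divide_by_zero(x, y):
    """Analyze `100 // (x - y)` for all x, y in the given intervals."""
    divisor = x - y
    return divisor.may_contain(0)

# x in [10, 20], y in [0, 5]: x - y is in [5, 20], so division is safe.
print(may_divide_by_zero(Interval(10, 20), Interval(0, 5)))   # False
# x in [0, 10], y in [5, 15]: x - y is in [-15, 5], so zero is possible.
print(may_divide_by_zero(Interval(0, 10), Interval(5, 15)))   # True

# Imprecision: if y = x + 1 then y - x is always 1, but intervals forget
# the relation between x and y and report a possible zero (a false alarm).
x = Interval(0, 10)
y = x + Interval(1, 1)
print(may_divide_by_zero(y, x))                               # True
```

A `False` answer covers every concrete input in the ranges at once. The price is the occasional false alarm, as the last case shows.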

Real-World Applications of Formal Verification

1. Aerospace and Avionics

NASA and Boeing use formal verification to validate flight control systems. A single software failure can lead to life-threatening disasters.

2. Automotive Industry

Autonomous vehicle systems like braking and steering control rely on mathematically verified models for safety compliance.

3. Medical Devices

Pacemakers, insulin pumps, and surgical robots use embedded systems that undergo formal testing to meet FDA safety regulations.

4. Cybersecurity

Formal verification is employed to verify encryption algorithms and security protocols to ensure there are no vulnerabilities or logic flaws.

5. Finance and Blockchain

Smart contracts are formally verified to avoid multi-million-dollar bugs. Ethereum-based projects often use formal methods before deployment.

These examples are increasingly integrated into QA testing online training, preparing learners to work in high-stakes environments.

Challenges in Formal Verification Testing

1. Steep Learning Curve

Understanding formal languages and proof systems requires a solid grasp of mathematics, logic, and theoretical computer science. However, modern QA IT training breaks these concepts down into digestible modules.

2. Scalability

Formal methods are resource-intensive and may not scale well to extremely large systems. Modular verification, which proves properties of individual components separately and then combines the results, helps address this.

3. Tool Complexity

Many verification tools require knowledge of specialized languages and configuration. Nonetheless, online platforms offering testing courses online now include walkthroughs and templates to ease the learning curve.

Formal Verification vs. Traditional Testing

| Feature | Traditional Testing | Formal Verification |
|---|---|---|
| Basis | Empirical (Test cases) | Mathematical (Formal proofs) |
| Coverage | Partial (based on test cases) | Exhaustive (state-space analysis) |
| Automation | Highly automated | Semi/fully automated |
| Human Dependency | Manual test case design | Requires formal model writing |
| Application Areas | General-purpose software | Safety/mission-critical systems |
| Learning Availability | Widely available QA courses | Advanced QA and CS courses |

For learners pursuing quality assurance courses online, combining both methods yields the best outcomes.
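The difference between partial and exhaustive coverage can be shown on a tiny example. The sketch below assumes a hypothetical `midpoint` function using 8-bit unsigned arithmetic, and uses brute-force enumeration as a stand-in for state-space analysis; real tools reason symbolically rather than looping over inputs.

```python
BITS = 8
MASK = (1 << BITS) - 1  # emulate unsigned 8-bit arithmetic

def midpoint(lo, hi):
    """Buggy on purpose: lo + hi can wrap around before the division."""
    return ((lo + hi) & MASK) // 2

def spec(lo, hi, result):
    """Specification: the midpoint lies between lo and hi."""
    return lo <= result <= hi

# Traditional testing: a few hand-picked cases, all of which pass.
test_cases = [(0, 10), (3, 7), (20, 100), (0, 0)]
assert all(spec(lo, hi, midpoint(lo, hi)) for lo, hi in test_cases)

def first_counterexample():
    """Exhaustive check over every pair with 0 <= lo <= hi <= 255."""
    for lo in range(MASK + 1):
        for hi in range(lo, MASK + 1):
            if not spec(lo, hi, midpoint(lo, hi)):
                return (lo, hi)
    return None

print(first_counterexample())  # (1, 255)
```

The hand-picked tests pass because none of them makes the sum overflow, while the exhaustive check finds the first failing input right away.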

How to Learn Formal Verification

1. Enroll in Testing Courses Online

Many platforms now offer QA testing online training that includes introductory modules on formal methods, model checking, and logic-based testing.

2. Study Formal Methods Tools

Explore tools like SPIN, Alloy, and Frama-C through open-source communities. Most courses also provide tool-based assignments.

3. Understand Formal Languages

Gain comfort with specification languages. Some QA IT training programs introduce simplified logic systems to make it easier for non-mathematicians.

4. Apply to Projects

Practice verifying small systems such as a login protocol, banking algorithm, or traffic light controller.

5. Certification

Look for QA certifications that include formal verification as a specialization. These are especially valuable for industries requiring regulatory compliance.

Formal Verification in QA Career Paths

Incorporating formal verification knowledge into your QA skillset can open doors to specialized roles, including:

- Software Verification Engineer

- Safety & Compliance QA Analyst

- Formal Methods Specialist

- Embedded Systems QA Engineer

- Security Software QA

Employers in sectors like defense, finance, healthcare, and AI development often seek candidates with formal methods expertise, skills often nurtured through QA IT training and testing courses online.

Final Thoughts: Why Formal Verification Is Worth Learning

In today’s competitive tech landscape, mastering traditional QA methods alone is not enough. Formal verification offers a mathematical edge: it shows that software behaves as specified in every scenario the model covers.

Whether you’re a QA beginner or a seasoned professional, embracing this technique through quality assurance courses online or QA testing online training will set you apart.

You don’t have to become a mathematician to use formal verification effectively. With the right tools, guided training, and practice, anyone in QA can integrate these principles into their workflow, improving software quality, reducing bugs, and enhancing user trust.

Key Takeaways

- Formal Verification Testing is a mathematical technique for ensuring software correctness.

- It is ideal for mission-critical systems and complements traditional testing methods.

- Popular tools include SPIN, Coq, and Frama-C.

- You can learn formal verification through QA IT training, online tutorials, and hands-on projects.

- QA professionals with formal verification expertise are in high demand across industries like aerospace, healthcare, and finance.

Call to Action

Want to gain advanced QA skills and master formal verification techniques?

Explore top-rated testing courses online and QA testing online training programs that cover formal methods, model checking, and industry tools. Your journey to becoming a high-impact QA professional starts today.

13 Responses

Formal Verification Testing

Formal verification testing is applied to make sure that the software satisfies its requirements. These formal verification techniques are applicable mainly at the design and development stages of software development. For example, one form of formal verification is logical equivalence checking, which verifies the logical equivalence of RTL, gate-level, and transistor-level netlists. It has a faster verification cycle for the technical design specification. It only generates functional vectors for simulation to spot the bugs.

Examples of formal verification methods are deductive verifications, modeling tools, simulators, static analysis, etc.

Formal verification is the process of checking whether a design satisfies some requirements. The formal techniques are applied to verify the system. These formal verification techniques are applicable mainly at the levels of software development like design and development stages.

Formal verification makes sure that the software satisfies its requirements. It is not limited to the IT industry; it is also applicable to electronics and embedded systems. The formal techniques are applied to verify the system. These formal verification techniques are applicable mainly at the design and development stages of software development. It is basically used to eliminate the chance of certain sorts of bugs in an exceedingly narrow layer of the system, the one which is being verified. The types of requirements or specifications that can be verified include functional behavior, protocols, real-time behavior, memory, security, and the robustness of the software. Examples of formal verification methods are deductive verification, modeling tools, simulators, and static analysis.

Formal verification is the process of checking whether a design satisfies some requirements (properties). These formal verification techniques are applicable mainly at the levels of software development like design and development stages.

Formal verification identifies errors in the model and generates test vectors that reproduce those errors in simulation. Unlike traditional testing methods, in which expected results are expressed with concrete data values, formal verification techniques let you work on models of system behavior. The basic aim of formal verification is to eliminate the chance of certain sorts of bugs in an exceedingly narrow layer of the system, the one which is being verified.

Formal verification techniques are applicable mainly at the design and development stages of software development. The basic aim of formal verification is to eliminate the chance of certain sorts of bugs in an exceedingly narrow layer of the system. The types of requirements or specifications that can be verified include functional behavior, protocols, real-time behavior, memory, security, and the robustness of the software.

Formal Verification helps to analyze deep properties of the code to prove nothing is lost in translation from specification to code and the software does only what it is intended to do. Formal Verification allows us to evaluate all possible scenarios all at once and as a result we eliminate entire classes of flaws dramatically improving the safety and security of our systems. Formal verification also helps in greatly reducing costs by formally verifying both their design and implementation.

These formal verification techniques are applicable mainly at the design and development stages of software development. Formal verification testing is something like using mathematical techniques to convincingly argue that a chunk of software implements a specification.

It is performed using mathematical techniques to check whether the algorithm of an application is built according to the requirement specification.

Formal verification testing proves that software meets its requirements. It uses mathematical techniques to prove that a chunk of software implements its specification.

Formal verification proves whether the software satisfies its requirements. It is not limited to the IT industry; it is also applicable to electronics and embedded systems. These formal verification techniques are applicable mainly at the design and development stages of software development. For example, one form of formal verification is logical equivalence checking, which verifies the logical equivalence of RTL, gate-level, and transistor-level netlists. It has a faster verification cycle for the technical design specification. It only generates functional vectors for simulation to spot the bugs.

Formal verification testing is something like using mathematical techniques to convincingly argue that a chunk of software implements a specification. The specification can be very domain-specific, as in “a robot may not injure a person or, through inaction, allow a person to come to harm”, or it can be generic, as in “no execution of the system dereferences a null pointer”. So far we know extremely little about what is involved in verifying specifications that refer to entities in the world, as opposed to entities inside the software.

This blog post on formal verification testing is incredibly insightful! It does a great job of breaking down the complexities of the verification process and highlighting its importance in ensuring reliable and error-free designs. A must-read for anyone in the VLSI field.