Web scraping, as the name suggests, is a technique used to automatically extract data from websites. It involves processing the HTML of a web page to pull out useful information, which is then converted into another format, such as a spreadsheet or database entry, for further analysis and storage. This automated process lets users gather large amounts of data efficiently, making it ideal for applications where data retrieval and organization are essential.
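Here is a minimal sketch of that extract-and-convert step. It is written in Python, uses only the standard library (not Selenium), and works on a hypothetical, already-downloaded page that contains a simple HTML table. `TableParser`, `html_table_to_csv`, and `SAMPLE_HTML` are illustrative names, not part of any library. The sketch pulls the cell text out of each row and writes it as CSV, a format any spreadsheet can open.

```python
import csv
import io
from html.parser import HTMLParser


class TableParser(HTMLParser):
    """Collect the text of every <td>/<th> cell, grouped by <tr> row."""

    def __init__(self) -> None:
        super().__init__()
        self.rows: list[list[str]] = []
        self._row: list[str] | None = None   # cells of the row being read
        self._cell: list[str] | None = None  # text chunks of the cell being read

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        # Only keep text that sits inside a table cell.
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None and self._row is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            self.rows.append(self._row)
            self._row = None


def html_table_to_csv(html: str) -> str:
    """Parse the table rows out of `html` and return them as CSV text."""
    parser = TableParser()
    parser.feed(html)
    out = io.StringIO()
    csv.writer(out).writerows(parser.rows)
    return out.getvalue()


# Hypothetical page content used only for this example.
SAMPLE_HTML = """
<table>
  <tr><th>Product</th><th>Price</th></tr>
  <tr><td>Keyboard</td><td>49.99</td></tr>
  <tr><td>Mouse</td><td>19.99</td></tr>
</table>
"""

print(html_table_to_csv(SAMPLE_HTML))
```

Real pages are messier than this sample, so scrapers usually rely on a dedicated parser such as Beautiful Soup, or on Selenium's element locators, rather than a hand-rolled parser like this one.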

While Selenium is widely recognized as a tool for automation testing, it also offers other practical applications. One of these notable uses is in web scraping, where Selenium’s ability to interact dynamically with web content provides a unique advantage. In this article, we’ll explore whether Selenium is the right tool for your web scraping needs or if alternatives like Beautiful Soup might be better suited.

To start, let’s delve into the benefits and limitations of using Selenium for web scraping and compare its performance with other popular tools.

Benefits of Using Selenium for Web Scraping

Because Selenium WebDriver operates a real web browser to access a website, its behavior closely resembles a human browsing session. When WebDriver navigates to a webpage, the browser loads all of the site’s resources (JavaScript files, images, CSS styles, and other assets), just as it would during normal user interaction.

Because the browser executes the page’s JavaScript, WebDriver can handle dynamic content and interactive elements that only appear after scripts run. By default it waits for the page to finish loading, and you can add explicit waits for specific elements to render, which makes data extraction from complex sites more reliable.
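The key idea is to wait for a specific element rather than sleep for a fixed amount of time. Selenium’s Python bindings expose this as `WebDriverWait(driver, 10).until(...)`, combined with an expected condition such as `EC.presence_of_element_located((By.ID, "price"))`. The `price` ID there is only an example. The sketch below reproduces the same polling pattern with the standard library so that it runs without a browser. `wait_until` and `make_fake_lookup` are hypothetical helpers, and the fake lookup simply pretends the element appears on the third check.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def wait_until(condition: Callable[[], Optional[T]], timeout: float = 10.0, poll: float = 0.5) -> T:
    """Call `condition` repeatedly until it returns a truthy value.

    Raises TimeoutError if `timeout` seconds pass first. This mirrors the idea
    behind Selenium's WebDriverWait: re-check the page instead of guessing a delay.
    """
    deadline = time.monotonic() + timeout
    while True:
        result = condition()
        if result:
            return result
        if time.monotonic() >= deadline:
            raise TimeoutError(f"condition not met within {timeout} seconds")
        time.sleep(poll)


def make_fake_lookup(ready_after: int) -> Callable[[], Optional[str]]:
    """Hypothetical stand-in for an element lookup that only succeeds once
    JavaScript has 'rendered' the element (here: on the Nth call)."""
    calls = 0

    def lookup() -> Optional[str]:
        nonlocal calls
        calls += 1
        return "19.99" if calls >= ready_after else None

    return lookup


price = wait_until(make_fake_lookup(ready_after=3), timeout=2.0, poll=0.01)
print(price)
```

Polling against a deadline keeps the scraper quick when the page is fast and bounded when it is slow. Selenium’s own `WebDriverWait` polls every 0.5 seconds by default.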

This full-page loading process is particularly useful when scraping data from websites that rely heavily on JavaScript for dynamic content updates, as WebDriver can seamlessly access information that static scrapers might miss. However, this comprehensive loading process also makes WebDriver somewhat resource-intensive, potentially slowing down the scraping process compared to lighter tools that don’t render full pages.
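This gap between a browser and a static scraper is easy to demonstrate. In the hypothetical page below, JavaScript writes the price into an empty `<span>`, so the HTML that a plain HTTP client receives never contains it. The sketch uses Python’s standard `html.parser` module to read the text of an element by its ID. `TextById`, `static_text`, and `RAW_HTML` are illustrative names. Run against the raw HTML, the sketch finds nothing. A browser driven by WebDriver would run the script first and see `19.99`.

```python
from html.parser import HTMLParser

# Elements that never have a closing tag, so they must not affect nesting depth.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}

# Hypothetical raw HTML as a plain HTTP client would receive it: the price is
# filled in later by JavaScript, so the <span> is empty in the source.
RAW_HTML = """
<html><body>
  <span id="price"></span>
  <script>
    document.getElementById("price").textContent = "19.99";
  </script>
</body></html>
"""


class TextById(HTMLParser):
    """Collect the text inside the element whose id equals `target_id`."""

    def __init__(self, target_id: str) -> None:
        super().__init__()
        self.target_id = target_id
        self.depth = 0  # > 0 while the parser is inside the target element
        self.parts: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        if self.depth:
            self.depth += 1
        elif dict(attrs).get("id") == self.target_id:
            self.depth = 1

    def handle_endtag(self, tag):
        if self.depth and tag not in VOID_TAGS:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth:
            self.parts.append(data)


def static_text(html: str, element_id: str) -> str:
    """Return the text of the element with `element_id`, without running any JavaScript."""
    parser = TextById(element_id)
    parser.feed(html)
    return "".join(parser.parts).strip()


print(repr(static_text(RAW_HTML, "price")))
```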

Nonetheless, WebDriver’s ability to mimic human browsing remains a powerful asset when interacting with websites that require complex navigation, user input, or scripted behaviors, offering a high level of flexibility for advanced web scraping tasks.

The browser also stores all the cookies that websites set, exactly as it would for a human visitor. This makes it much harder for a site to tell whether a real person or a program is accessing it. With WebDriver you get this behavior in a few simple steps. Emulating the same behavior in a program that sends handcrafted HTTP requests to the server is much harder.
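The following sketch shows what handcrafted cookie handling involves. It uses Python’s standard `http.cookies.SimpleCookie` to do by hand what a browser does automatically: remember each `Set-Cookie` header the server sends and return the right `Cookie` header on the next request. The header values and the helper names `remember_cookies` and `cookie_header` are hypothetical. For real HTTP clients, the standard library’s `http.cookiejar.CookieJar` together with `urllib.request.HTTPCookieProcessor` automates this part. It still does not run JavaScript that sets cookies, which a browser handles for free.

```python
from http.cookies import SimpleCookie


def remember_cookies(jar: SimpleCookie, set_cookie_headers: list[str]) -> None:
    """Store (or overwrite) cookies from the server's Set-Cookie response headers."""
    for header in set_cookie_headers:
        jar.load(header)


def cookie_header(jar: SimpleCookie) -> str:
    """Build the Cookie request header that a browser would send back automatically."""
    return "; ".join(f"{name}={morsel.value}" for name, morsel in jar.items())


jar = SimpleCookie()
# Hypothetical headers from a login response and a later page.
remember_cookies(jar, ["session=abc123; Path=/; HttpOnly", "theme=dark; Path=/"])
# The server rotates the session ID; the stored value must be replaced, not duplicated.
remember_cookies(jar, ["session=xyz789; Path=/; HttpOnly"])
print(cookie_header(jar))
```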

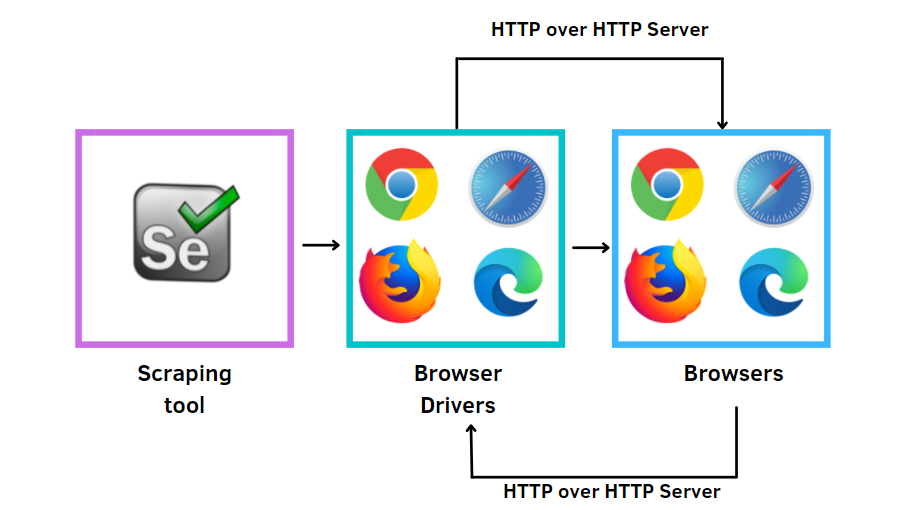

To control these browsers, Selenium provides WebDriver, an interface useful for tasks such as automated testing, retrieving cookies, taking screenshots, and much more. Common Selenium use cases for web scraping include form submission, auto-login, adding and deleting data, and handling alerts. To learn more about using Selenium for web scraping, you can enroll in one of the many online Selenium training courses.

Web scraping itself, also known as web data extraction, is an automated process for collecting large amounts of data from websites. Many consider it one of the most reliable and efficient ways to acquire web data.

Drawbacks of Using Selenium for Web Scraping

Despite these advantages, using Selenium for web scraping also has some drawbacks. Let’s look at a few of them.

- High Network Traffic: Web browsers download many supplementary files that may be of no value to you (such as CSS, JavaScript, and image files). This can generate far more traffic than a scraper that requests only the resources it really needs through individual HTTP requests.

- Easy Detection with Simple Tools like Google Analytics: Because WebDriver runs each page’s JavaScript, JavaScript-based tracking tools (such as Google Analytics) record every page you visit. If you explore a lot of pages with WebDriver, the resulting traffic pattern stands out, and the site owner doesn’t even need to install a sophisticated scraping detection mechanism!

- Time and Resource Consumption: When you use WebDriver to scrape web pages, you load an entire web browser into system memory. This takes time and consumes system resources. It can also cause your security software to overreact, and it may even block your program from running.

- Slow Scraping Process: A browser waits for the entire web page to load before it lets you access the page’s elements. As a result, scraping can take longer than making a simple HTTP request to the web server.
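To put rough numbers on the traffic and speed points above, the sketch below scans a hypothetical page with Python’s standard `html.parser`. It lists the scripts, stylesheets, and images a browser would also fetch, while a plain HTTP scraper downloads only the HTML. `ResourceCollector`, `extra_requests`, and `PAGE_HTML` are illustrative names. Treat the result as a lower bound: it ignores fonts, files pulled in by CSS or JavaScript, and browser caching.

```python
from html.parser import HTMLParser


class ResourceCollector(HTMLParser):
    """Record external scripts, stylesheets, and images referenced by a page."""

    def __init__(self) -> None:
        super().__init__()
        self.resources: list[tuple[str, str]] = []

    def handle_starttag(self, tag, attrs):
        a = {name: value or "" for name, value in attrs}
        if tag == "script" and a.get("src"):
            self.resources.append(("script", a["src"]))
        elif tag == "link" and "stylesheet" in a.get("rel", "").lower().split() and a.get("href"):
            self.resources.append(("stylesheet", a["href"]))
        elif tag == "img" and a.get("src"):
            self.resources.append(("image", a["src"]))


def extra_requests(html: str) -> list[tuple[str, str]]:
    """Return the (kind, url) pairs a browser would fetch in addition to the HTML."""
    collector = ResourceCollector()
    collector.feed(html)
    return collector.resources


# Hypothetical page: the only data we want is the one table row.
PAGE_HTML = """
<html>
<head>
  <link rel="stylesheet" href="/css/site.css">
  <script src="/js/app.js"></script>
  <script src="/js/analytics.js"></script>
</head>
<body>
  <img src="/img/logo.png" alt="logo">
  <table><tr><td>Keyboard</td><td>49.99</td></tr></table>
  <img src="/img/banner.jpg" alt="">
  <img src="/img/ad.gif" alt="">
</body>
</html>
"""

resources = extra_requests(PAGE_HTML)
print(f"Plain HTTP scraper: 1 request; browser: at least {1 + len(resources)} requests")
for kind, url in resources:
    print(f"  {kind:<10} {url}")
```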

Types of Web Scraping with Selenium

There are two types of web scraping with Selenium:

- Static web scraping

- Dynamic web scraping

Static and dynamic websites differ fundamentally in how they deliver content to users. On static websites, the content is fixed and remains the same for every visitor until it is manually updated by the site owner or developer. These pages are straightforward, composed of simple HTML and CSS, providing a consistent experience without relying on user input or backend processing.

In contrast, dynamic websites serve content that can change in response to user actions, location, or other data inputs.

These sites often use databases and server-side scripting to personalize the experience, allowing content to adjust in real time for each visitor. This adaptability, while enhancing user engagement, also means that dynamic sites require more complex processing, especially when scraping, as they frequently rely on JavaScript to load and display new content elements.

For example, the same page can show different content depending on the user’s profile. This adds complexity. A dynamic page may be assembled on the server before it is sent, or it may be built in the browser (on the client side) after the initial page loads.

With a static website, you can download the page content locally and use a script to collect the data from it. Dynamic website content, in contrast, may not be in the initial response at all: it is generated by additional requests and scripts that run after the first page load.

To extract data from a website, Selenium provides standard locators that help you find content on the page. A locator is simply a way of identifying an HTML element, for example by its ID, name, class name, tag name, link text, CSS selector, or XPath. For further details on why and how to use Selenium for web scraping, you can broaden your horizons by earning a certification through an online Selenium training course.
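To illustrate how locators work, the sketch below applies the same ideas (locating by ID, class name, tag name, and link text) to a hypothetical, well-formed product page. It uses Python’s standard `xml.etree.ElementTree` and its limited XPath support, so it runs without a browser. In Selenium’s Python bindings, the equivalents would be calls such as `driver.find_element(By.ID, "title")` or `driver.find_elements(By.CLASS_NAME, "price")`. The IDs and class names are only examples. Note that ElementTree requires well-formed markup, which real-world HTML often is not.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed (XHTML-style) product listing.
PAGE = """
<html><body>
  <h1 id="title">Store</h1>
  <div class="item"><span class="name">Keyboard</span><span class="price">49.99</span></div>
  <div class="item"><span class="name">Mouse</span><span class="price">19.99</span></div>
  <a href="/next">Next page</a>
</body></html>
"""

root = ET.fromstring(PAGE.strip())

# By ID (Selenium: By.ID)
title = root.find(".//*[@id='title']").text

# By class name (Selenium: By.CLASS_NAME). This predicate compares the whole
# class attribute, whereas Selenium matches a single class token.
prices = [el.text for el in root.findall(".//span[@class='price']")]

# By tag name (Selenium: By.TAG_NAME)
links = [a.get("href") for a in root.iter("a")]

# By exact link text (Selenium: By.LINK_TEXT); [.='text'] needs Python 3.7+
next_link = root.find(".//a[.='Next page']")

print(title, prices, links, next_link.get("href"))
```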

Wrapping up

The benefits and drawbacks of using Selenium for web scraping are not limited to those discussed here, as Selenium’s capabilities extend far beyond scraping and are primarily designed for web application testing. However, many web scraping experts recognize Selenium as a powerful tool, especially for scenarios requiring interaction with dynamic content, such as clicking buttons, handling pop-ups, or navigating through multiple pages.

Selenium’s flexibility also makes it easy to integrate into web scraping solutions written in popular programming languages. It offers official bindings for Java, C#, Ruby, Python, and JavaScript, and community-maintained libraries bring it to other languages such as PHP. This lets developers and data enthusiasts tailor it to their specific project needs.

We hope this article provides you with valuable insights to make an informed decision about whether Selenium is the right choice for your web scraping tasks.

While it may not be the most efficient option for large-scale or static data scraping, its versatility in handling complex, interactive elements on web pages can make it a strong candidate for specific scraping applications. By understanding both its strengths and limitations, you’ll be well-equipped to harness Selenium effectively, choosing the best approach to meet your data extraction goals.

Conclusion

In conclusion, while Selenium can be a powerful tool for web scraping due to its ability to interact dynamically with web pages, it’s not always the most efficient choice for all scraping projects. Selenium shines when dealing with websites that require logging in, scrolling, or other interactions, making it ideal for more complex tasks that static scrapers struggle with. However, for simpler, static data extraction, other tools may offer faster, more resource-efficient options.

Ultimately, choosing Selenium for web scraping depends on the specific requirements of your project. If you need real-time interaction with a website’s dynamic content, Selenium’s capabilities may outweigh the performance trade-offs. For basic, large-scale data extraction, though, exploring lighter alternatives could be beneficial. In either case, understanding the scope and demands of your scraping task will help you make the most effective decision.

Call to Action

Ready to make the most of your web scraping projects? Join our Selenium for Web Scraping course at H2K Infosys and dive into a comprehensive, hands-on experience tailored for dynamic data extraction. With Selenium’s powerful capabilities, you’ll learn how to navigate websites that require real-time interaction, handle complex HTML structures, and extract valuable data efficiently. This Selenium certification course is designed to equip you with the skills to confidently tackle even the most complex scraping challenges while expanding your automation expertise.

Whether you’re a beginner or looking to refine your skills, H2K Infosys provides the expert guidance and practical knowledge you need to succeed. Enroll today to unlock new career opportunities in data extraction and automation, and take your capabilities to the next level. Don’t miss this chance to master web scraping with Selenium and become a valuable asset in the fast-evolving world of data and technology!