In this article, you will get an introduction to Hadoop and learn how this open-source framework is used to build data processing applications with a simple programming model.

What is Hadoop?

Apache Hadoop is an open-source framework for building data processing applications that use a simple programming model and run in a distributed computing environment.

It combines distributed processing with scale-out storage for data management.

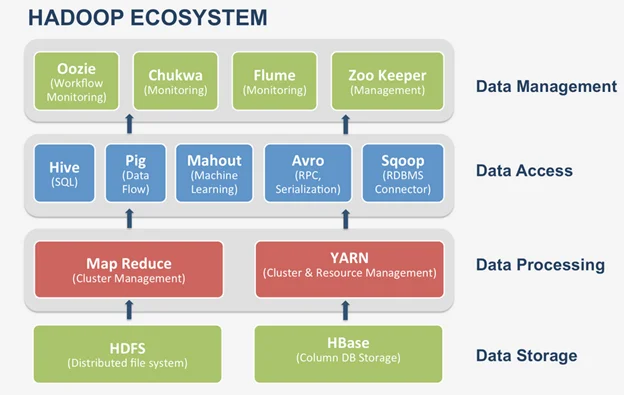

Hadoop ecosystem and components

Hadoop's success has pushed developers to create a range of related software. Hadoop, together with this set of related software, makes up the Hadoop ecosystem. The primary purpose of this software is to extend the functionality and increase the efficiency of the Hadoop framework.

The Hadoop ecosystem comprises:

- Apache Pig

- Apache HBase

- Apache Hive

- Apache Sqoop

- Apache Flume

- Apache ZooKeeper

- Hadoop Distributed File System

- MapReduce

Apache Pig

Apache Pig is a platform for writing data analysis programs for large datasets stored in a Hadoop cluster. Its scripting language is called Pig Latin.

Apache HBase

Apache HBase is a column-oriented database that enables real-time reads and writes of data stored on HDFS.

Apache Hive

Apache Hive provides an SQL-like language for querying data stored in HDFS. Hive's SQL dialect is called HiveQL.

Apache Sqoop

The name Sqoop is a combination of SQL and Hadoop. Apache Sqoop is an application used to transfer data between Hadoop and relational database management systems.

Apache Flume

Apache Flume is an application for moving streaming data into a Hadoop cluster. A good example of streaming data is data that is continuously written to log files.

Apache ZooKeeper

Finally, Apache ZooKeeper handles the coordination these components need to function correctly.

Hadoop MapReduce

MapReduce is a computational model and framework for creating applications that run on Hadoop. MapReduce applications can process very large datasets in parallel on large clusters.
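To make the model concrete, here is a minimal single-process sketch of the classic word count job in plain Python. It does not use Hadoop's API. The function names `map_phase`, `shuffle_phase`, `reduce_phase`, and `word_count` are made up for this illustration, and a real job would run many map and reduce tasks in parallel across the cluster.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(line: str) -> Iterator[Tuple[str, int]]:
    """Emit a (word, 1) pair for every word in one input line."""
    for word in line.lower().split():
        yield word, 1


def shuffle_phase(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Group emitted values by key, the step that sits between map and reduce."""
    grouped: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key: str, values: List[int]) -> Tuple[str, int]:
    """Collapse all values for one key into a single count."""
    return key, sum(values)


def word_count(lines: Iterable[str]) -> Dict[str, int]:
    """Run map, shuffle, and reduce in sequence (illustrative, not distributed)."""
    pairs = (pair for line in lines for pair in map_phase(line))
    grouped = shuffle_phase(pairs)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


if __name__ == "__main__":
    sample = ["hadoop stores data", "hadoop processes data"]
    print(word_count(sample))
```

Because each line is mapped independently and each key is reduced independently, both phases can be spread over many machines, which is what lets the model scale.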

HDFS

HDFS stands for Hadoop Distributed File System. HDFS handles the storage side of Hadoop applications, and MapReduce applications read their data from it. HDFS splits files into blocks, creates multiple copies of each block, and distributes them across different nodes in a cluster. This distribution makes storage fault tolerant and lets computations run quickly, in parallel, close to the data.
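The idea of splitting data into blocks and copying each block to several nodes can be sketched in a few lines of Python. This is a toy model, not HDFS code: `split_into_blocks` and `place_replicas` are hypothetical helpers, the block size is tiny for readability, and the round-robin placement is a simplifying assumption (HDFS itself uses a rack-aware placement policy). The default of three replicas mirrors the usual HDFS default replication factor.

```python
from typing import Dict, List


def split_into_blocks(data: bytes, block_size: int) -> List[bytes]:
    """Cut the data into fixed-size blocks; the last block may be shorter."""
    return [data[i:i + block_size] for i in range(0, len(data), block_size)]


def place_replicas(
    num_blocks: int, nodes: List[str], replication: int = 3
) -> Dict[int, List[str]]:
    """Assign each block to `replication` distinct nodes (round-robin assumption)."""
    if replication > len(nodes):
        raise ValueError("replication factor cannot exceed the number of nodes")
    placement: Dict[int, List[str]] = {}
    for block_id in range(num_blocks):
        placement[block_id] = [
            nodes[(block_id + offset) % len(nodes)] for offset in range(replication)
        ]
    return placement


if __name__ == "__main__":
    blocks = split_into_blocks(b"abcdefghij", block_size=4)
    layout = place_replicas(len(blocks), ["dn1", "dn2", "dn3", "dn4"])
    for block_id, holders in layout.items():
        print(block_id, blocks[block_id], holders)
```

With every block stored on several nodes, losing one node leaves at least one other copy of each block available.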

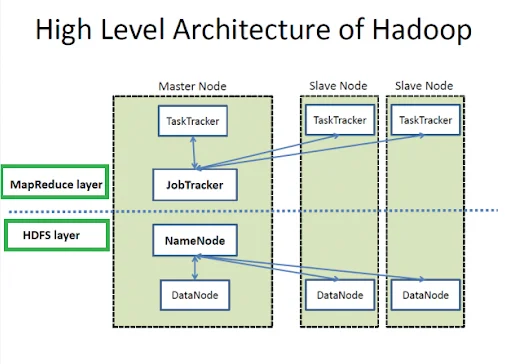

Hadoop Architecture

Hadoop follows a master-slave architecture for distributed data storage and processing. A Hadoop cluster is made up of a single master node and multiple slave nodes.

NameNode:

The NameNode tracks every file and directory in the HDFS namespace. It maintains the file system by performing operations such as renaming, opening, and closing files.

DataNode:

A DataNode manages the state of an HDFS node and lets you interact with the blocks stored on it. An HDFS cluster contains multiple DataNodes. A DataNode's responsibility is to serve read and write requests from the file system's clients.
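The division of labor between the two roles can be illustrated with a small toy model: the NameNode keeps only metadata (which blocks belong to which path, and where they live), while DataNodes hold the block contents and answer read and write requests. All class and function names here (`ToyNameNode`, `ToyDataNode`, `write_file`, `read_file`) are invented for this sketch and are not part of Hadoop's API. Replication is left out to keep it short.

```python
from typing import Dict, List, Tuple


class ToyDataNode:
    """Stores block contents and serves read/write requests for them."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.blocks: Dict[str, bytes] = {}

    def write_block(self, block_id: str, data: bytes) -> None:
        self.blocks[block_id] = data

    def read_block(self, block_id: str) -> bytes:
        return self.blocks[block_id]


class ToyNameNode:
    """Stores metadata only: path -> ordered list of (block id, DataNode)."""

    def __init__(self) -> None:
        self.namespace: Dict[str, List[Tuple[str, ToyDataNode]]] = {}
        self._next_id = 0

    def add_block(self, path: str, node: ToyDataNode) -> str:
        """Record a new block for `path` and return its generated id."""
        block_id = f"blk_{self._next_id}"
        self._next_id += 1
        self.namespace.setdefault(path, []).append((block_id, node))
        return block_id

    def get_blocks(self, path: str) -> List[Tuple[str, ToyDataNode]]:
        return self.namespace[path]

    def rename(self, old_path: str, new_path: str) -> None:
        """A rename changes metadata only; no block data moves."""
        self.namespace[new_path] = self.namespace.pop(old_path)


def write_file(namenode: ToyNameNode, datanodes: List[ToyDataNode],
               path: str, chunks: List[bytes]) -> None:
    """Client write: register each block with the NameNode, send data to a DataNode."""
    for index, chunk in enumerate(chunks):
        node = datanodes[index % len(datanodes)]
        block_id = namenode.add_block(path, node)
        node.write_block(block_id, chunk)


def read_file(namenode: ToyNameNode, path: str) -> bytes:
    """Client read: look up block locations, then fetch each block from its DataNode."""
    return b"".join(node.read_block(bid) for bid, node in namenode.get_blocks(path))


if __name__ == "__main__":
    nn, dns = ToyNameNode(), [ToyDataNode("dn1"), ToyDataNode("dn2")]
    write_file(nn, dns, "/logs/a.txt", [b"hello ", b"hadoop"])
    nn.rename("/logs/a.txt", "/logs/b.txt")
    print(read_file(nn, "/logs/b.txt"))
```

In this sketch the rename touches only the NameNode's dictionary, which mirrors why namespace operations are metadata operations rather than data transfers.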

MasterNode:

The master node enables you to execute the parallel processing of data using Hadoop MapReduce. The master node comprises a Task Tracker, Job Tracker, DataNode, and NameNode.

Slave node:

Complex calculations are carried out on the additional machines in the Hadoop cluster. These individual machines are called slave nodes. Each slave node runs a Task Tracker and a DataNode, which synchronize their work with the Job Tracker and the NameNode, respectively.
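One way to picture how a Job Tracker hands work to Task Trackers is a scheduler that prefers a Task Tracker running on a node that already stores the input block. The sketch below is a simplified illustration under that assumption. The function `assign_tasks` and the node names are hypothetical, and the actual scheduler also considers factors such as available task slots and rack locality.

```python
from typing import Dict, List


def assign_tasks(
    block_locations: Dict[str, List[str]], task_trackers: List[str]
) -> Dict[str, str]:
    """Assign one map task per block, preferring a tracker that holds the block.

    Among the candidate trackers, the least-loaded one is chosen. If no tracker
    holds the block locally, any tracker may be used.
    """
    load = {tracker: 0 for tracker in task_trackers}
    assignment: Dict[str, str] = {}
    for block_id, holders in block_locations.items():
        local = [node for node in holders if node in load]
        candidates = local or task_trackers
        chosen = min(candidates, key=lambda node: load[node])
        assignment[block_id] = chosen
        load[chosen] += 1
    return assignment


if __name__ == "__main__":
    locations = {"b0": ["n1", "n2"], "b1": ["n1"], "b2": ["n9"]}
    print(assign_tasks(locations, ["n1", "n2", "n3"]))
```

Running the task where the block already lives avoids copying the block over the network, which is the main benefit of pairing a Task Tracker with a DataNode on each slave node.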